Meta-Programming

The boundary between describing a system and creating one is disappearing. The main artifact of software engineering is no longer code. It’s how you think.

How you structure an agent’s memory, specs, and feedback loops matters more than which model you pick. This site documents why, and what to do about it.

Evolution: From Vibing to Meta-Programming

The data landed before the theory. LinearB studied 8.1 million pull requests. The headline: AI-generated code ships faster but breaks more. More issues, longer review queues, lower acceptance rates. A separate randomized trial by METR measured the perception gap: developers felt 20% faster, while tasks actually took 19% longer end-to-end. Creation accelerated. Verification didn’t.

The natural response is to wait for a better model. Stanford’s 2026 Meta-Harness study suggests that’s the wrong fix. They took the same model, changed only the scaffolding around it, and measured a 6× performance gap on the same benchmark. The model didn’t change. The process around it did, and that was the difference between failing and passing.

This is the evolutionary pressure. It runs in three stages.

Vibe coding is intent without understanding. You describe what you want, the agent produces something that looks right, you ship it. Fast, often wrong, occasionally catastrophic. This is where most teams discovered the LinearB pattern firsthand: creation accelerated, verification didn’t scale with it. The gap between “AI wrote it” and “AI wrote it correctly” isn’t closing on its own.

Agentic engineering is the correction. Agents make decisions: they call tools, branch on results, orchestrate other agents, run for hours. The engineer’s job shifts from writing code to designing the system that writes code. The pipeline, the verification gates, the handoffs. Microsoft’s 10-month Copilot study across 878 pull requests confirmed the architectural shift: “the bottleneck moved from typing speed to knowledge, judgment, and ability to articulate tasks.” That’s not a productivity finding. It’s a description of a new job.

Meta-programming is what comes next. If the bottleneck is articulation, and the verification problem is structural, then the engineering artifact is language itself: the specs, rules, and pipelines that shape agent behavior. You’re no longer writing programs. You’re writing the instructions that write the programs, and teaching the system to improve those instructions from experience.

The Thesis

Linguistic Meta-Programming (LMP) is self-improvement of a coding agent through linguistic feedback (specs, reviews, lessons, rules) without touching model weights.

The academic framing arrived independently. Tsinghua’s March 2026 NLAH paper (Natural-Language Agent Harnesses) built systems where harness behavior is externalized as “a portable executable artifact in editable natural language.” Their opening diagnosis: “Agent performance increasingly depends on harness engineering, yet harness design is usually buried in controller code.” Making it explicit and linguistic, not hard-coded, is the intervention. That’s precisely what LMP is.

Stanford’s DSPy arrived at the same idea from the optimization side: stop hand-writing prompts, search the language space automatically. Treat the prompt as code that can be tested, scored, and improved by the system itself. Same underlying claim: language is the parameter space, and it can be engineered.

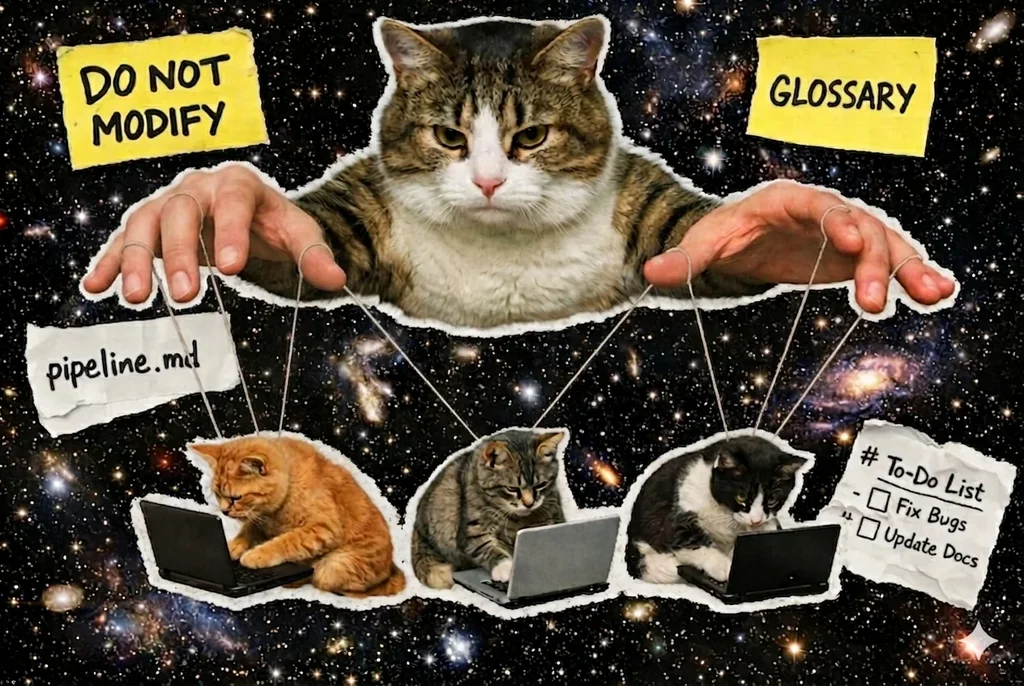

The practical consequence: natural language is code. A spec with a DO NOT clause is a constraint. A GLOSSARY is a type system. A LESSONS.md is a feedback loop. A pipeline definition is a program. The difference between a well-written AGENTS.md and a poorly-written one is the difference between a correct program and a buggy one. The compiler is just an LLM.
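One way to take "natural language is code" literally is to parse a spec into a structure a program can check. The following is a minimal sketch, assuming a hypothetical plain-text layout with `DO:`, `DO NOT:`, and `GLOSSARY:` headers followed by `- ` bullets. The layout, the `Spec` class, and `parse_spec` are illustrative assumptions, not a standard format.

```python
from dataclasses import dataclass, field

# Hypothetical section headers; real specs may use a different layout.
SECTION_HEADERS = ("DO", "DO NOT", "GLOSSARY")


@dataclass
class Spec:
    do: list[str] = field(default_factory=list)
    do_not: list[str] = field(default_factory=list)
    glossary: dict[str, str] = field(default_factory=dict)


def parse_spec(text: str) -> Spec:
    """Split a plain-text spec into its DO, DO NOT, and GLOSSARY entries."""
    spec = Spec()
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        header = line.rstrip(":").upper()
        if line.endswith(":") and header in SECTION_HEADERS:
            current = header
            continue
        # Ignore anything that is not a bullet inside a known section.
        if current is None or not line.startswith("- "):
            continue
        item = line[2:].strip()
        if current == "DO":
            spec.do.append(item)
        elif current == "DO NOT":
            spec.do_not.append(item)
        else:
            # Glossary bullets are assumed to look like "term: definition".
            term, _, definition = item.partition(":")
            spec.glossary[term.strip()] = definition.strip()
    return spec


example = """
DO:
- Add retries to the payment client
DO NOT:
- Change the public API of PaymentClient
GLOSSARY:
- retry: a repeated call after a transient failure
"""
spec = parse_spec(example)
print(spec.do_not)
```

Once the clauses are data, a DO NOT entry can be checked like any other constraint, for example by a reviewer step that compares it against a diff.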

This convergence is measurable at scale. A Bamberg/Heidelberg systematic analysis of 2,926 repositories across Claude Code, GitHub Copilot, Cursor, Gemini, and Codex found independent convergence on the same pattern: linguistic configuration files (CLAUDE.md, AGENTS.md, COPILOT-INSTRUCTIONS.md) as the primary mechanism for shaping agent behavior. Pydantic took it furthest: they extracted 4,668 PR review comments and distilled them into roughly 150 AGENTS.md rules. Implicit engineering judgment, compiled into explicit agent instructions.

AGENTS.md now ships in 60,000+ repositories and has Linux Foundation endorsement. Microsoft Research RiSE named “Intent Formalization” a grand challenge for 2026. AWS launched Kiro. A code review agent in production self-improves from pull request activity in real time, closing the loop that Layer 3 describes. The industry is converging on language as the primary engineering artifact. It hasn’t named what it’s converging on.

Three Layers

LMP has structure. Three layers, each dependent on the one below.

Layer 1: Persistent Model of Self

Not documentation. Not memory. A working model of what the agent knows, how it knows it, where it fails, and what constraints it operates under. This is what separates a generic model from a system shaped by a specific engineer’s context.

The performance difference is real. The ERL paper showed that agents operating with heuristics extracted from prior trajectories outperformed ReAct baselines by 7.8% on the Gaia2 benchmark. Their finding: “Heuristics provide more transferable abstractions than few-shot prompting.” Persistent structured knowledge outperforms in-context examples. The format matters.

We measured this directly. In a controlled A/B test, the same architectural problem ran through a generic Claude Sonnet instance and through an agent with a structured knowledge base. The generic agent asked for a code map. The KB agent flagged the exploration-versus-exploitation paradox, with evidence from prior sessions, before writing a line of code. The difference isn’t code quality. It’s the level of reasoning the agent brings before touching implementation. The broader practitioner community confirmed the pattern independently. Karpathy’s framing of personal KB-building via LLMs named the same mechanism: the KB is the primary artifact, not the code it produces.

Layer 1 explains why all production agent systems converge on human-readable markdown: AGENTS.md, CLAUDE.md, SKILL.md, DECISIONS.md, MEMORY.md. Markdown is version-controlled, readable by humans, parseable by agents, portable across model versions. Anthropic’s “Building Agents with Skills” organizes persistent behavioral configuration as composable markdown files rather than model fine-tunes. Not a coincidence. It’s the natural format for shared knowledge that both sides can read and edit.

Layer 2: Intent Form Selection

There’s no single canonical form for expressing intent to an agent. There’s a set of them, each with its own strengths: natural language for throwaway exploration, AGENTS.md for cross-session conventions, SKILL.md for reusable procedures, DO/DO NOT/GLOSSARY for boundary-sensitive tasks, hooks for non-negotiable gates. Layer 2 is the practice of picking the right form for the task, not inventing a new language.

The choice tracks three dimensions. Complexity: a one-line fix runs fine on natural language; a multi-file refactor doesn’t. Reliability requirement: a check that must block a release when a test fails belongs in a hook, not in a convention document agents follow 70% of the time. Horizon: a one-off exploration doesn’t need a spec; a project contract does. Getting this wrong in either direction costs: under-specified tasks fail the way Experiment 2 did (wandering scope, wrong intent); over-specified tasks hit the AGENTS.md cliff (context files past 500 lines measurably reduce success rates).
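The three dimensions can be read as a small decision procedure. The sketch below is one possible encoding, not a rule taken from the source: the `Task` fields, the `select_form` function, and especially the order in which the checks run are assumptions made for illustration. Only the five forms and the 500-line figure come from the text.

```python
from dataclasses import dataclass

# From the text: context files past 500 lines measurably reduce success rates.
AGENTS_MD_LINE_CLIFF = 500


@dataclass(frozen=True)
class Task:
    multi_file: bool                  # complexity
    must_never_fail: bool             # reliability requirement
    recurs_across_sessions: bool      # horizon
    reusable_procedure: bool = False  # a procedure worth packaging as a skill


def select_form(task: Task) -> str:
    """Pick an intent form. The precedence order is an illustrative assumption."""
    if task.must_never_fail:
        return "hook"
    if task.reusable_procedure:
        return "SKILL.md"
    if task.multi_file:
        return "DO/DO NOT/GLOSSARY spec"
    if task.recurs_across_sessions:
        return "AGENTS.md"
    return "natural language"


def over_cliff(context_file_text: str) -> bool:
    """Flag a context file that has grown past the line threshold."""
    return len(context_file_text.splitlines()) > AGENTS_MD_LINE_CLIFF


print(select_form(Task(multi_file=False, must_never_fail=False, recurs_across_sessions=False)))
print(select_form(Task(multi_file=True, must_never_fail=False, recurs_across_sessions=True)))
```

Putting the reliability check first reflects the idea that a non-negotiable gate should not depend on the agent choosing to follow a document.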

The constraint language (DO NOT, GLOSSARY, explicit acceptance criteria) is the form that most consistently moves outcomes. A 2026 controlled experiment quantified it: removing spec detail dropped task pass rates from 89% to 56% in single-agent setups and from 58% to 25% in multi-agent. A full spec alone at the merge point recovered the 89% ceiling. An AST-based conflict detector added zero. Specs do the heavy lifting; reconciliation infrastructure does not.
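The four pass rates quoted above imply the same absolute drop in both setups but a larger relative loss in the multi-agent case. A quick check of that arithmetic, using only the figures from the text (the `drops` helper is a name introduced here):

```python
# Pass rates quoted in the text, in percent: (higher rate, lower rate).
PASS_RATES = {"single-agent": (89, 56), "multi-agent": (58, 25)}


def drops(before: float, after: float) -> tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = before - after
    relative = round(absolute / before * 100, 1)
    return absolute, relative


for setup, (before, after) in PASS_RATES.items():
    absolute, relative = drops(before, after)
    print(f"{setup}: -{absolute} points, -{relative}% relative")
```

Both setups lose 33 points. Relative to their starting rates, that is about 37% of single-agent passes and about 57% of multi-agent passes.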

The academic framing arrived at the same answer from three directions. Microsoft Research RiSE named Intent Formalization a grand challenge, with a spectrum from lightweight tests to functional specs to DSL synthesis. Martin Fowler’s progression (spec-first → spec-anchored → spec-as-source) maps the maturity curve. A formal Context Engineering paper in April 2026 named five roles a complete context package needs to carry (Authority, Exemplar, Constraint, Rubric, Metadata) and measured that a structured package raised first-pass acceptance from 32% to 55%. DO/DO NOT/GLOSSARY handles three of those five; the other two (working code samples and explicit success criteria) are where most specs silently underperform.

The form-selection space now has reference implementations at both ends. At the small end sits the single-imperative — one line starting with must / do not / always / never, capped at 200 characters, behavior-specific rather than principled — used for cheap per-session post-mortem capture (lopi orchestrator, May 2026). At the large end sits the multi-section AGENTS.md or DO/DO NOT/GLOSSARY spec used for cross-session contracts. The decision tree narrows from “what forms exist” to “which size threshold favors which form” — a question with a tractable shape because both extremes have working examples to compare. 🟡
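The single-imperative form is constrained enough to validate mechanically. The sketch below checks the properties named above: one line, an opening must / do not / always / never, and a 200-character cap. It is an illustration of those constraints, not lopi's actual code, and `is_single_imperative` is a hypothetical name. The "behavior-specific rather than principled" property is a judgment call that this check does not attempt.

```python
IMPERATIVE_PREFIXES = ("must", "do not", "always", "never")
MAX_CHARS = 200  # cap quoted in the text


def is_single_imperative(rule: str) -> bool:
    """Return True if the rule is one line, short enough, and starts with an imperative."""
    text = rule.strip()
    if not text or "\n" in text or len(text) > MAX_CHARS:
        return False
    lowered = text.lower()
    # Require a word boundary after the prefix so "mustard ..." does not match "must".
    return any(lowered.startswith(prefix + " ") for prefix in IMPERATIVE_PREFIXES)


print(is_single_imperative("Never edit generated files by hand"))
print(is_single_imperative("Prefer small functions"))
```

A check like this could run at capture time, so a post-mortem note that does not fit the form is rejected before it reaches the knowledge base.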

Layer 3: Closed Loop

The system observes what worked, extracts lessons, refines the model, improves the language. No weight updates.

Stanford’s Meta-Harness study proved this at the harness level: automated optimization searching configurations the same way gradient descent searches weights. The 6× performance gap between manual and optimized harnesses shows the scale of what’s available in the language space, without any model change.

A separate end-to-end pipeline run validated the approach concretely. Twenty-four files, 253 tests, zero regressions, $5.50 in API cost. Structured process (Scout → Spec → Plan → Worker → Reviewer → Lessons) beat raw context injection on cost, quality, and first-attempt pass rate. The Lessons stage is what makes the next run cheaper: the reviewer extracts structured findings, and those findings feed forward.
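The six stages can be sketched as functions that pass a shared context forward, with the Lessons stage feeding the next run. Everything below is a stub under stated assumptions: the stage bodies, the `Context` dictionary shape, and `run_pipeline` are hypothetical and stand in for real agent calls. Only the stage names and their order come from the text.

```python
Context = dict  # shared state handed from stage to stage


def scout(ctx: Context) -> Context:
    # Stub: a real scout would explore the codebase.
    return {**ctx, "findings": f"files relevant to {ctx['task']!r}"}


def spec(ctx: Context) -> Context:
    # Prior lessons are folded into the spec as constraints.
    return {**ctx, "spec": {"goal": ctx["task"], "constraints": list(ctx["lessons"])}}


def plan(ctx: Context) -> Context:
    return {**ctx, "plan": ["step 1 (stub)"]}


def worker(ctx: Context) -> Context:
    return {**ctx, "diff": "<stub diff>"}


def reviewer(ctx: Context) -> Context:
    # Stub: a real reviewer is a separate agent; here it returns a fixed finding.
    return {**ctx, "review": ["Always run the test suite before declaring done"]}


def lessons(ctx: Context) -> Context:
    # Feed reviewer findings forward, skipping ones already recorded.
    merged = list(ctx["lessons"])
    for finding in ctx["review"]:
        if finding not in merged:
            merged.append(finding)
    return {**ctx, "lessons": merged}


PIPELINE = [
    ("Scout", scout), ("Spec", spec), ("Plan", plan),
    ("Worker", worker), ("Reviewer", reviewer), ("Lessons", lessons),
]


def run_pipeline(task: str, prior_lessons: list[str]) -> Context:
    ctx: Context = {"task": task, "lessons": list(prior_lessons)}
    trace = []
    for name, stage in PIPELINE:
        ctx = stage(ctx)
        trace.append(name)
    ctx["trace"] = trace
    return ctx


first = run_pipeline("add retries", prior_lessons=[])
second = run_pipeline("add timeouts", prior_lessons=first["lessons"])
print(second["spec"]["constraints"])
```

The second run starts with the first run's reviewer finding already in its spec, which is the feed-forward step the paragraph describes.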

The compounding mechanism is what distinguishes Layer 3 from simple iteration. Each run narrows the gap between what you intend and what the agent produces. The knowledge base grows. The spec language tightens. Failure modes get documented before they recur. This is why LMP is a compounding return, not a one-time gain.

The closed loop has a known failure mode: noise accumulation. An unfiltered extractor degrades future retrieval because every weak lesson it writes lowers the signal-to-noise ratio of the KB it’s supposed to improve. The structural fix is a score-threshold gate at write time — a run scoring below some quality bar writes nothing, on the reasoning that a sub-threshold run isn’t informative enough to generalise from. One open-source orchestrator (lopi, May 2026) ships this as LESSON_QUALITY_GATE = 0.6, an explicit numeric threshold paired with the extraction step. Pattern is observable; the threshold value is calibration work specific to each retrieval setup. 🟡
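A write-time gate of this kind is short to express. The sketch below assumes a run has already been scored on a 0 to 1 scale and that the knowledge base is a simple list. The scoring function, the list-backed KB, and `maybe_write_lessons` are hypothetical. Only the constant name and the value 0.6 are taken from the text, and this is not lopi's implementation.

```python
LESSON_QUALITY_GATE = 0.6  # value quoted in the text; calibrate per retrieval setup


def maybe_write_lessons(
    run_score: float,
    candidate_lessons: list[str],
    kb: list[str],
    gate: float = LESSON_QUALITY_GATE,
) -> int:
    """Append new lessons to the KB only if the run cleared the gate.

    Returns the number of lessons written.
    """
    if run_score < gate:
        # A sub-threshold run writes nothing at all.
        return 0
    written = 0
    for lesson in candidate_lessons:
        if lesson not in kb:  # skip exact duplicates
            kb.append(lesson)
            written += 1
    return written


kb: list[str] = []
weak = maybe_write_lessons(0.4, ["Never trust the cache"], kb)
strong = maybe_write_lessons(0.8, ["Always pin dependency versions"], kb)
print(weak, strong, kb)
```

Gating on the run rather than on each lesson keeps the filter cheap: the extractor is never asked to judge its own output, only whether the run it came from was good enough to learn from.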

The progression: from vibe coding to meta-programming

Structured process beat raw context injection on every measured dimension: cost ($6.63 vs $9.99 per task), quality, and first-attempt pass rate. Ten controlled A/B tests, same codebase, same task.

The three most recent experiments extended the picture. An edit tool investigation traced a persistent error pattern to our own extension, not the platform. Post-fix benchmark: 7.1% errors, all model mistakes, all self-recovering in one retry. A model evaluation found that thinking level acts as a compliance-to-conviction dial: higher thinking produces conviction (holding position under pushback) rather than compliance. A distinct mode emerged: the agent says no while providing the implementation anyway. Soft sycophancy. Not caught by standard benchmarks. A documented degradation incident confirmed that the structured pipeline resists provider-side quality shifts by design: when model reasoning degrades, the externalized plan compensates.

External evidence confirms the same structure: LinearB’s 8.1M-PR dataset, Stanford’s Meta-Harness results, Tsinghua’s NLAH paper, the Bamberg/Heidelberg repository analysis. All arrived independently. All confirmed the same structural conclusions. Every major claim is backed by evidence documented in our References.

Velocity scaled. Verification didn’t. Amazon mandated Kiro across 21K agents, laid off 30K reviewers, then hit 4 Sev-1 incidents in 90 days: a 6-hour outage, 6.3M lost orders.

Phase 3: Meta-programming. The agent’s operating context (memory, specs, rules, feedback loops) becomes the primary engineering artifact. Change the harness, not the model. Stanford proved this in March 2026: same LLM, different scaffolding, SOTA results. SWE-bench: custom scaffolding alone adds 10%. Same model underneath.

You can’t delegate your way to a better agent. You have to build the system around it that makes every session compound on the last.

Writing specs, structuring memory, and encoding feedback isn’t prep work you do before the real engineering starts. It is the engineering.

LMP formalizes this into three layers (described above). The industry is converging on the same idea without coordinating. 60,000 repos now ship AGENTS.md, a cross-tool standard for agent instructions under the Linux Foundation. GitHub’s spec-kit hit 79K stars: “the lingua franca of development moves to a higher level, and code is the last-mile approach.”

Academia named it. Microsoft Research: “Intent Formalization,” a grand challenge. Tsinghua’s NLAH went further: harness behavior externalized as a portable executable artifact, independent of the model underneath. DSPy treats prompts as learnable parameters and optimizes them automatically. Prompts as code. Literally.

Specification: DO/DON’T/GLOSSARY structure, Spec-Driven Development, Intent Formalization as a grand challenge. How language becomes contract.

Context Engineering: Token budgets, progressive disclosure, caching strategies. The physics of what the agent can see at once.

Pipeline: Scout → Spec → Plan → Worker → Reviewer → Lessons. How the six stages connect and why the order matters.

Verification: Separate reviewer agent, multi-model critique, OTel instrumentation. Why the verification layer can’t scale with the creation layer.

Self-Improvement: Memory architecture, lessons extraction, closed loop mechanics. How the system compounds without weight updates.

Principles: Six principles, three application levels, anti-patterns. The opinionated foundation everything else builds on.

Landscape: Tools, projects, papers, and trends as of April 2026. Where the industry is and where it’s going.